When I first started building what would become Arkana, I had one question: could it actually handle real malware? Not toy samples or contrived demos — proper, nasty, in-the-wild stuff that would give a seasoned analyst a headache. So I threw 14 different samples at it and let it loose. Here’s what happened.

The Test Subjects

I didn’t cherry-pick easy samples. Several were grabbed fresh from MalwareBazaar on the same day they were submitted, so no analysis had been published yet and there was nothing to crib from. Others were well-known families where I could check my findings against existing research.

The full lineup:

- Ransomware: LockBit 3.0 — the big one

- Remote access trojans: AsyncRAT, ValleyRAT (linked to a Chinese threat group), Elysium RAT, CrySome RAT, and a custom unnamed RAT

- Credential stealers: StealC (two different variants), ACRStealer, SalatStealer

- Red team tooling: Brute Ratel — a commercial attack simulation tool that’s been abused by real threat actors

- Kernel exploits: Trojan.Delshad, a loader that uses the “bring your own vulnerable driver” (BYOVD) technique to gain kernel-level control of the system

- Reverse engineering challenges: two CTF puzzles, one involving anti-debugging tricks and the other hiding a password inside a neural network

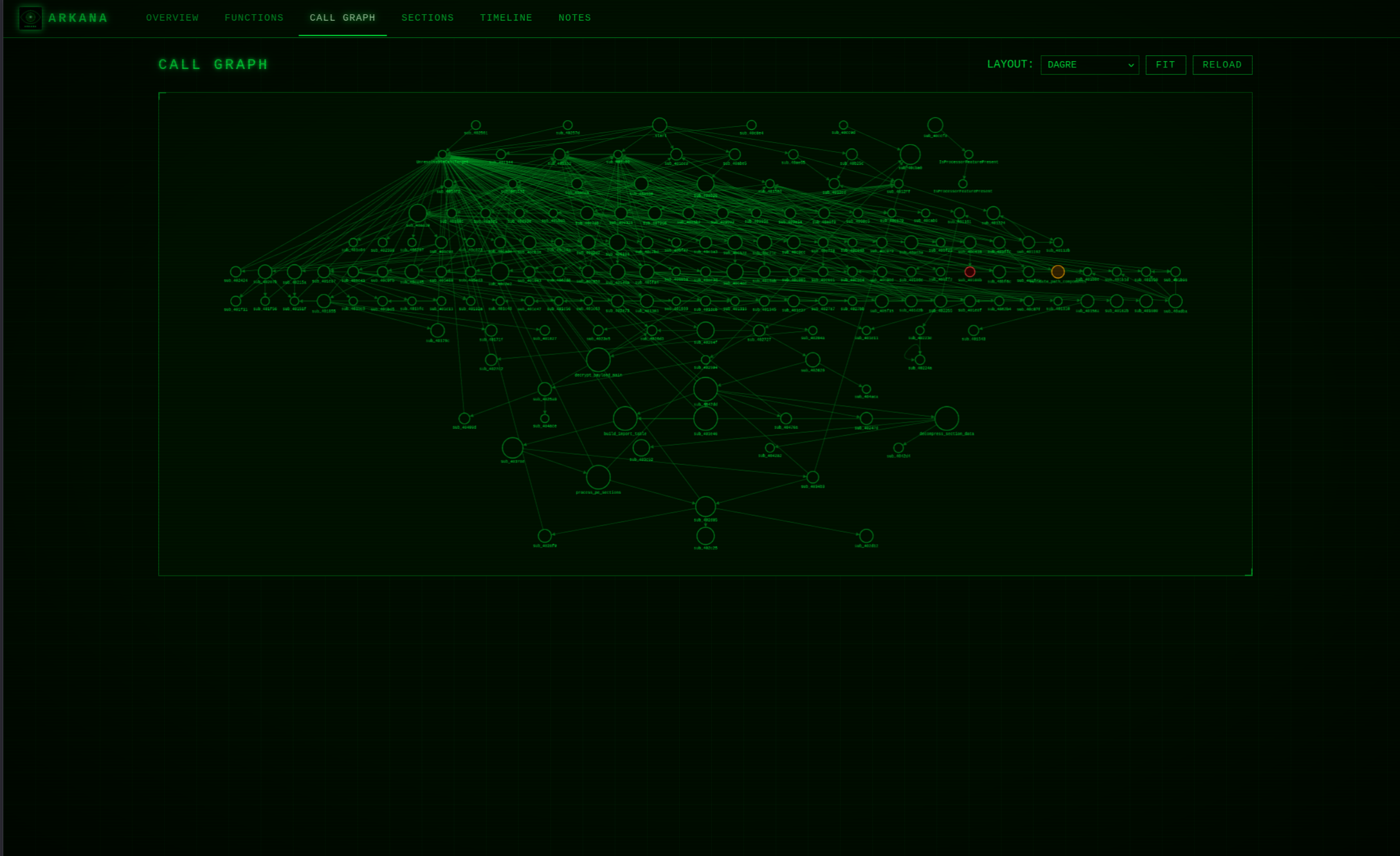

Every single analysis was done entirely through Arkana, using natural language. No manually loading files into Ghidra or IDA, no separate scripts, no jumping between tools. Just me describing what I wanted to know, and the AI picking the right combination of tools to answer.

Peeling Back the Layers

Modern malware almost never arrives as a single, readable file. It’s wrapped in layers of protection — encrypted, compressed, packed inside other files, sometimes all at once. Think of it like a series of locked boxes inside locked boxes. You have to open each one to get to the actual payload.

ValleyRAT had five of these layers. The outermost was a standard compression wrapper, but inside that was encrypted code, a custom configuration pipeline, self-injecting shellcode that disabled Windows security protections on the fly, and finally a bespoke encryption algorithm that nobody had documented before. That last layer needed a custom decryption script to crack.

One of the StealC variants was even more convoluted — seven layers deep, starting with a self-extracting archive, then an obfuscated batch script, then reassembling a program from four separate fragments, then multiple rounds of encryption. Each layer used a different technique. Having over 200 data transformation tools available in one place meant I could chain together decoding, decryption, and decompression steps without switching context.
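To make the idea of chaining steps concrete, here is a minimal Python sketch of a layered decode pipeline. It is not Arkana’s implementation, and the layers shown (fragment reassembly, a single-byte XOR with the hypothetical key `0x5A`, zlib decompression) are placeholders rather than the actual StealC layers. The point is that every layer is just a bytes-to-bytes function, so peeling the boxes open is function composition.

```python
import zlib
from functools import reduce
from typing import Callable, Iterable

# Each layer is a bytes -> bytes transform; a pipeline applies them in order.
Layer = Callable[[bytes], bytes]


def reassemble(fragments: Iterable[bytes]) -> bytes:
    """Hypothetical layer: join payload fragments in their original order."""
    return b"".join(fragments)


def xor_layer(key: int) -> Layer:
    """Hypothetical layer: single-byte XOR with a fixed key."""
    return lambda data: bytes(b ^ key for b in data)


def run_pipeline(data: bytes, layers: list[Layer]) -> bytes:
    """Feed the output of each decoding layer into the next one."""
    return reduce(lambda acc, layer: layer(acc), layers, data)


if __name__ == "__main__":
    # Build a toy sample by applying the layers in reverse: compress, XOR, split.
    original = b"toy payload bytes " * 4
    packed = xor_layer(0x5A)(zlib.compress(original))
    fragments = [packed[i:i + 8] for i in range(0, len(packed), 8)]

    # Decode: reassemble the fragments, undo the XOR, then decompress.
    recovered = run_pipeline(reassemble(fragments), [xor_layer(0x5A), zlib.decompress])
    print(recovered == original)
```

Swapping a layer in or out is just editing the list passed to `run_pipeline`, which mirrors how you iterate when a new layer turns up mid-analysis.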

Breaking Custom Encryption

This is where things got properly interesting. Off-the-shelf malware tends to use well-known encryption that’s straightforward to reverse. But several of these samples had rolled their own.

ValleyRAT used a completely custom encryption algorithm — 16 rounds of mathematical operations that I had to reverse-engineer from the decompiled code and then reimplement in Python. ACRStealer went a different route with a five-step process: substitute each byte using a lookup table, reverse the whole array, swap pairs of bytes, subtract a value, then XOR the result. Each step is simple on its own, but chained together they’re effective at hiding the real data.
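Because each ACRStealer step is individually reversible, the whole chain is easy to express once the constants are known. Below is a minimal Python sketch that assumes the five steps are applied in the order listed to decode the data. The lookup table, the subtracted value (`SUB_VALUE`) and the single-byte XOR key (`XOR_KEY`) are hypothetical stand-ins; the real values would come from the decompiled sample.

```python
# Hypothetical parameters: the real table and constants live in the sample.
SBOX = [(i * 167 + 13) % 256 for i in range(256)]  # odd multiplier => a permutation
INV_SBOX = [SBOX.index(v) for v in range(256)]      # inverse table for encode()
SUB_VALUE = 0x21
XOR_KEY = 0x7C


def swap_pairs(data: bytes) -> bytes:
    """Swap each adjacent byte pair; a trailing odd byte stays put."""
    out = bytearray(data)
    for i in range(0, len(out) - 1, 2):
        out[i], out[i + 1] = out[i + 1], out[i]
    return bytes(out)


def decode(blob: bytes) -> bytes:
    """Substitute, reverse, swap pairs, subtract, then XOR."""
    step = bytes(SBOX[b] for b in blob)                # 1. table substitution
    step = step[::-1]                                  # 2. reverse the whole array
    step = swap_pairs(step)                            # 3. swap adjacent pairs
    step = bytes((b - SUB_VALUE) % 256 for b in step)  # 4. subtract, wrapping at 256
    return bytes(b ^ XOR_KEY for b in step)            # 5. XOR with the key


def encode(plain: bytes) -> bytes:
    """Inverse of decode(): undo each step in reverse order."""
    step = bytes(b ^ XOR_KEY for b in plain)
    step = bytes((b + SUB_VALUE) % 256 for b in step)
    step = swap_pairs(step)[::-1]  # undo the swap first, then the reversal
    return bytes(INV_SBOX[b] for b in step)
```

The `encode` function exists only so the sketch can be checked with a round trip; with the real constants, `decode` alone is what you would run over the encrypted blobs.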

SalatStealer used industry-standard encryption (AES-256), but the keys were buried deep in the decompiled code. Arkana’s decompiler pulled them out, and then the decryption pipeline did the rest.

In every case, the workflow was the same: decompile the function, understand the algorithm, then use the built-in data transformation tools to actually decrypt the payloads. No copy-pasting between tools, no writing standalone scripts.

When Reading the Code Isn’t Enough

Static analysis — reading the code without running it — gets you a long way. But some samples fight back.

One of the CTF challenges threw 26 different anti-debugging tricks at us, along with encrypted function pointers that made the code unreadable until you actually executed the decryption routines. Static analysis alone couldn’t crack it. So I used Arkana’s interactive debugger — powered by an emulation engine called Qiling — to step through the code, execute the decryption in isolation, inspect memory, and even patch the program on the fly. It took 151 tool calls across 6 debugging sessions to extract the flag. That kind of analysis simply isn’t possible with a tool that can only read code.

The Go-based malware presented different challenges. Both ACRStealer and SalatStealer were written in Go, which statically links its runtime and produces enormous binaries, and both samples had their symbol names stripped. Arkana’s Go-specific parser recovered the function names and module structure from both. ACRStealer was particularly sneaky: 1,651 functions, zero visible indicators of compromise, and every Windows API call resolved dynamically at runtime so that none of them would show up in a standard import table scan.
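Resolving APIs dynamically means the binary looks functions up at runtime instead of listing them in its import table. One widespread way to do that is to store a hash of each API name and compare it against hashes of the names a loaded DLL exports; the source does not say which scheme ACRStealer used. The sketch below uses a ROR-13 style additive hash purely as an illustration, and the candidate name list is an assumption. An analyst precomputes hashes for known API names, then looks up the constants found in the code.

```python
def ror32(value: int, count: int) -> int:
    """Rotate a 32-bit integer right by `count` bits."""
    value &= 0xFFFFFFFF
    return ((value >> count) | (value << (32 - count))) & 0xFFFFFFFF


def ror13_hash(name: str) -> int:
    """Illustrative ROR-13 additive hash over the ASCII bytes of an API name."""
    h = 0
    for ch in name.encode("ascii"):
        h = (ror32(h, 13) + ch) & 0xFFFFFFFF
    return h


def build_lookup(api_names: list[str]) -> dict[int, str]:
    """Map hash -> name so constants seen in the binary can be labelled."""
    return {ror13_hash(n): n for n in api_names}


if __name__ == "__main__":
    # Hypothetical candidate list; a real one would cover every export of the DLLs involved.
    candidates = ["LoadLibraryA", "GetProcAddress", "VirtualAlloc", "CreateFileW"]
    table = build_lookup(candidates)
    constant_from_binary = ror13_hash("VirtualAlloc")  # stand-in for a hash seen in code
    print(hex(constant_from_binary), "->", table[constant_from_binary])
```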

How Does This Compare to Other Tools?

I’d be doing you a disservice if I didn’t put this in context. There are now several AI-powered reverse engineering tools out there, and they each have their place.

GhidraMCP is the most popular by a mile (over 8,000 stars on GitHub). It connects the free Ghidra reverse engineering tool to an AI assistant, exposing 27 tools for reading and annotating code: decompile a function, list strings, rename things, add comments. If you already use Ghidra and just want an AI helper for your existing workflow, it’s great. But it’s a read-and-annotate interface. It can’t unpack malware, run signature scans, emulate code, or extract indicators. You need Ghidra running with your file already loaded.

IDA Pro has about 10 different AI integrations floating around on GitHub, none of which has really become the standard. They’re all broadly similar — query an IDA database for functions, strings, and disassembly. The bigger issue is that IDA Pro itself costs over £1,300, which puts it out of reach for a lot of people.

Binary Ninja’s headless server is actually the closest competitor in terms of raw capability — 181 tools across 36 categories. It’s genuinely impressive for pure reverse engineering work. But like the others, it’s focused on understanding code structure rather than the full malware analysis workflow, and it needs a Binary Ninja licence.

Radare2’s server is free and powerful, but it works by passing raw commands to the radare2 engine. The AI has to know radare2’s command syntax to use it effectively, which is a lot to ask.

Here’s the thing, though. The decompiler isn’t really the point. IDA and Ghidra both produce excellent decompiled code, and for complex binaries their output will often be cleaner than what angr (Arkana’s decompilation engine) produces. I’m upfront about that.

But decompiling a function is maybe 10% of a malware analysis. The other 90% is unpacking the sample, decrypting payloads, matching it against known signatures, extracting network indicators, understanding how it communicates with its controller, and building a coherent picture from hundreds of data points. That’s where having 289 tools behind one interface — spanning decompilation, signature matching, string analysis, data transforms, emulation, interactive debugging, and similarity matching — makes the real difference.

And it’s free. No licences, no external tools to install. Docker, five commands, go.

The Surprises

A few things genuinely caught me off guard.

The unnamed WinHTTP RAT used a technique called Mixed Boolean-Arithmetic obfuscation. In plain terms, it takes a simple operation like “XOR these two values” and rewrites it as a mathematically equivalent but utterly unreadable mess of additions, multiplications, and bitwise operations. The main command handler was 959 lines long. It would take days to untangle by hand in a traditional tool, but Arkana’s decompiler made it manageable. This one scored 101 out of 100 on the risk scale — breaking the scale felt appropriate.
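A tiny example shows why MBA is so effective. The identities below are standard textbook rewrites, not ones recovered from the WinHTTP RAT: `x ^ y` equals `(x | y) - (x & y)` and also `x + y - 2*(x & y)`, all modulo 2^32. This Python sketch checks them on random 32-bit inputs. Real MBA obfuscators nest and stack many such rewrites, which is how one XOR becomes a page of arithmetic.

```python
import random

MASK32 = 0xFFFFFFFF


def xor_plain(x: int, y: int) -> int:
    return (x ^ y) & MASK32


def xor_mba_1(x: int, y: int) -> int:
    # x | y splits into the disjoint bit sets (x ^ y) and (x & y),
    # so subtracting the AND leaves exactly the XOR.
    return ((x | y) - (x & y)) & MASK32


def xor_mba_2(x: int, y: int) -> int:
    # x + y == (x ^ y) + 2*(x & y), so x ^ y == x + y - 2*(x & y) (mod 2**32).
    return (x + y - 2 * (x & y)) & MASK32


def xor_mba_nested(x: int, y: int) -> int:
    # Stack a second rewrite: a - b == a + ~b + 1 (mod 2**32).
    a = (x | y) & MASK32
    b = (x & y) & MASK32
    return (a + (~b & MASK32) + 1) & MASK32


if __name__ == "__main__":
    rng = random.Random(1234)
    for _ in range(10_000):
        x, y = rng.getrandbits(32), rng.getrandbits(32)
        assert xor_plain(x, y) == xor_mba_1(x, y) == xor_mba_2(x, y) == xor_mba_nested(x, y)
    print("all rewrites agree")
```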

CrySome RAT was the opposite — completely unobfuscated, all its source code recoverable. And that made it more interesting. We could see exactly how its five-layer persistence worked, including a genuinely novel trick: modifying the Windows Recovery partition so it would survive a full factory reset. It also marked itself as a critical system process, meaning killing it would crash the entire machine with a blue screen. Not subtle, but effective.

The Ternary Trap CTF challenge hid its password inside a neural network — a two-layer mathematical model with weights restricted to just three values: -1, 0, and +1. The password was the output of feeding characters through this model one at a time. No code execution needed. Pure maths, reconstructed entirely from static analysis. The answer? 3ig3nv4lu3 — leet-speak for “eigenvalue.” Nerdy and brilliant.
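Because every weight is -1, 0 or +1, evaluating a model like this statically comes down to additions and subtractions, which is why no execution was needed. The sketch below shows a two-layer forward pass in plain Python. It assumes a ReLU between the layers and no biases; the challenge’s actual architecture, weights, inputs and output-to-character encoding are not described here, so the example values are made up.

```python
Matrix = list[list[int]]
Vector = list[int]


def check_ternary(w: Matrix) -> None:
    """Reject any weight outside {-1, 0, +1}."""
    if any(v not in (-1, 0, 1) for row in w for v in row):
        raise ValueError("weights must be ternary")


def matvec(w: Matrix, x: Vector) -> Vector:
    # With ternary weights each dot product only adds, subtracts or skips inputs.
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


def forward(x: Vector, w1: Matrix, w2: Matrix) -> Vector:
    """Two-layer ternary network: hidden = ReLU(W1 x), output = W2 hidden."""
    check_ternary(w1)
    check_ternary(w2)
    hidden = [max(0, v) for v in matvec(w1, x)]
    return matvec(w2, hidden)


if __name__ == "__main__":
    # Made-up weights and input, small enough to check by hand.
    w1 = [[1, -1], [0, 1]]
    w2 = [[1, 1], [-1, 1]]
    print(forward([1, 2], w1, w2))  # [2, 2]
```

With the real weights extracted from the binary, the same loop run once per input character would reproduce the challenge’s outputs.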

Where It Falls Short

No tool does everything, and I’d rather be honest about the gaps than pretend they don’t exist.

The decompiler is good but it’s not best-in-class. For heavily optimised code or complex object-oriented programs, IDA Pro and Ghidra will produce cleaner, more readable output. Arkana compensates with breadth — when the decompilation is unclear, you can pivot to emulation, pattern matching, or debugging — but the raw decompilation quality is a genuine gap I’m working on.

Emulation isn’t real execution. Malware that checks for very specific things in its environment — particular registry keys, running services, network configurations — may behave differently in emulation than on a real infected machine. For that, you still want a proper sandbox like CAPEv2 or ANY.RUN running the sample for real. They’re complementary tools, not competitors.

And LockBit 3.0 straight-up won. The packing was so aggressive — near-maximum entropy, self-modifying code, layered encryption on the unpacking stub — that static analysis could only dissect the unpacking mechanism, not the ransomware payload underneath. This is exactly where you’d hand off to dynamic analysis in a sandbox and let the malware unpack itself.
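“Near-maximum entropy” has a concrete meaning: Shannon entropy over byte values tops out at 8 bits per byte, and encrypted or well-compressed data sits very close to that ceiling, while ordinary code and text score noticeably lower. A minimal Python version of the calculation follows; the windowed helper and the threshold in the usage lines are illustrative choices, not figures from the LockBit report.

```python
import math
import os
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Shannon entropy in bits per byte: 0.0 for constant data, at most 8.0."""
    if not data:
        return 0.0
    total = len(data)
    return sum((n / total) * math.log2(total / n) for n in Counter(data).values())


def entropy_windows(data: bytes, size: int = 4096) -> list[float]:
    """Entropy of consecutive fixed-size windows, useful for spotting packed regions."""
    return [shannon_entropy(data[i:i + size]) for i in range(0, len(data), size)]


if __name__ == "__main__":
    text_like = b"This program cannot be run in DOS mode. " * 100
    random_like = os.urandom(4096)  # stands in for encrypted or compressed data
    print(round(shannon_entropy(text_like), 2))
    print(round(shannon_entropy(random_like), 2))
    THRESHOLD = 7.5  # illustrative cut-off, not a standard value
    print([e > THRESHOLD for e in entropy_windows(random_like)])
```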

What It All Means

These 14 analyses taught me that the real value of an AI-driven platform isn’t replacing the analyst. It’s removing the friction between having a question and getting an answer. When you’re deep in a ValleyRAT investigation and you spot a custom encryption routine, you shouldn’t have to stop what you’re doing, open a different tool, write a decryption script, and come back. You should be able to say “decrypt this with the key we found” and keep investigating.

That’s what 289 tools behind one interface gives you. Not a magic button, but a platform that keeps you in flow.

All 14 reports are published in full on the Arkana GitHub. Have a read through and see what you think.
